
Part of the Strategic Impacts™ Framework Series by Sherri Monroe

New to this work? Begin with The Strategic Impacts Framework: An Introduction | Reader’s Guide


What We Measure Instead of What Changes

By Sherri Monroe

~7 min read | March 2026

When additive manufacturing begins to influence strategic conversations, measurement quickly follows.

Metrics are introduced to demonstrate progress, justify investment, and establish accountability. Utilization rates, part counts, cost comparisons, and lead-time reductions become familiar reference points. These measures are practical, accessible, and necessary. They make activity visible and comparable.

They do not, on their own, explain impact.

As organizations struggle to assess additive manufacturing maturity, measurement often fills the gap left by interpretation.

What can be counted is used as a proxy for what matters.

Over time, metrics become stand-ins for understanding, shaping not only how progress is reported, but how it is pursued.

This substitution is rarely intentional. It emerges because measurement feels objective where interpretation feels ambiguous.

Measurement in additive manufacturing programs has a structural problem, not a behavioral one. Organizations are not choosing the wrong metrics because of laziness or misalignment.

They are using the right metrics for the wrong system.

The metrics available to assess additive manufacturing were developed for activity-based production evaluation. When applied to a technology whose most significant effects are structural rather than output-based, they yield results that are accurate, comparable, and systematically misleading.

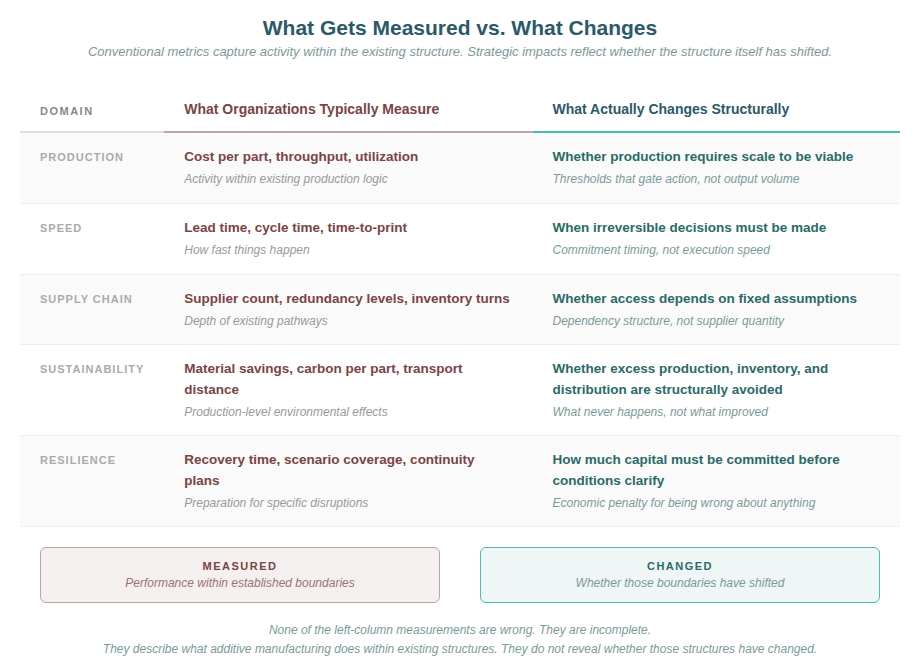

What organizations measure vs. what changes

When Measurement Substitutes for Understanding

Additive manufacturing lends itself to quantification. Machines run or sit idle. Parts are produced or not. Costs are higher or lower relative to alternatives. These indicators are useful within operational contexts, but they do not capture how the technology influences decision-making beyond execution.

When metrics are applied as evidence of strategic impact, additive manufacturing is evaluated primarily by activity and efficiency. The question becomes how much is being produced, how quickly, and at what cost. What is often left unexamined is whether the presence of additive manufacturing changes how organizations prepare, plan, or allocate risk, including whether those parts needed to be produced at all.

This gap helps explain why additive manufacturing can appear successful while remaining strategically marginal.

Within the Strategic Impacts™ Framework, what matters is not how much additive manufacturing is used, but how it alters readiness, availability, efficiency, and resilience as organizational conditions. These impacts do not always correlate neatly with utilization or output. They are often strongest where additive manufacturing activity is least visible.

This creates tension.

Metrics reward production. Strategic impact often appears in the absence of production—in decisions deferred, inventory avoided, or commitments not made. These effects are difficult to count, and therefore easy to overlook—or intentionally exclude.

When asked how inventory reduction, avoided obsolescence, or deferred capital commitments factored into their additive manufacturing evaluation, one manufacturer’s response was direct: those things are hard to calculate. They are. That difficulty is not a reason to ignore them. It is the reason additive manufacturing’s most significant effects remain invisible in the assessments designed to justify it.

This misalignment has a specific origin. The metrics most commonly applied to additive manufacturing—cost per part, throughput, machine utilization—were developed to optimize systems where scale is a prerequisite and tooling drives economics. They are not neutral measures.

They are artifacts of conventional manufacturing’s constraint structure.

When additive manufacturing is evaluated against those metrics, it is being asked to demonstrate proficiency at the constraints it was designed to change. Organizations that recognize this measure differently: they ask not whether additive manufacturing matches conventional performance on conventional terms, but whether the assumptions behind those terms still apply.

A major equipment manufacturer promises spare parts for legacy equipment regardless of age—a powerful brand commitment. When a customer needs a single part for a decades-old implement, the manufacturer orders the supplier minimum—often one hundred units. One is sold. Ninety-nine are scrapped immediately because inventorying a rarely requested part costs more than discarding it. In the cost comparison that follows, the additively manufactured alternative is measured against the unit price of one—not the actual cost of one hundred. The conventional part appears cheaper. The measurement made it so.
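The arithmetic behind this comparison can be sketched in a few lines. Only the minimum order of one hundred and the single unit sold come from the example above; both prices are hypothetical placeholders chosen for illustration, and the sketch ignores any cost of scrapping the unused parts.

```python
def effective_cost_per_part_used(unit_price, order_quantity, units_used):
    """Total spend on an order divided by the parts that actually reach a customer."""
    if units_used <= 0:
        raise ValueError("units_used must be positive")
    return unit_price * order_quantity / units_used


# Hypothetical figures, for illustration only.
conventional_unit_price = 40.0  # assumed quoted price per conventional part
minimum_order_quantity = 100    # supplier minimum, as in the example
units_sold = 1                  # one part reaches the customer
additive_unit_cost = 600.0      # assumed cost of one on-demand printed part

# What the usual comparison sees: the quoted price of a single unit.
quoted_comparison = conventional_unit_price

# What the order actually costs per part that is used.
actual_conventional = effective_cost_per_part_used(
    conventional_unit_price, minimum_order_quantity, units_sold
)

print(f"Quoted conventional price per part: {quoted_comparison:.2f}")
print(f"Conventional cost per part used:    {actual_conventional:.2f}")
print(f"Additive cost per part:             {additive_unit_cost:.2f}")
```

Under these assumed prices, the conventional part looks cheaper on the quoted unit price and more expensive once the full order is counted. Which figure enters the comparison decides the result.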

What Gets Counted Instead

A familiar pattern follows.

Organizations track additive manufacturing through measures that reflect performance within existing structures. Parts per machine. Cost per unit. Time to print. These metrics reinforce a view of additive manufacturing as an alternative production method rather than a structural influence.

In parallel, additive manufacturing may be credited for responsiveness during disruptions or accelerations. Emergency builds, expedited replacements, or rapid redesigns are highlighted as evidence of value. While these moments are real, they can distort perception. The technology’s most visible contributions become exceptional events rather than everyday influence.

Another pattern emerges in sustainability reporting.

Additive manufacturing is associated with specific environmental metrics—material savings, reduced transport, or localized production. These measures are meaningful, but they remain isolated. They capture improvements in particular cases without revealing whether underlying systems have changed. Sustainability is measured as an outcome rather than examined as a consequence of structure.

None of these measurements are wrong. They are incomplete.

They describe what additive manufacturing does within established boundaries. They do not ask whether additive manufacturing has moved those boundaries.

This limitation becomes more consequential as organizations attempt to position additive manufacturing strategically.

When metrics dominate evaluation, additive manufacturing is confined to where it is most measurable: within operations, engineering, or pilot programs.

Its influence on planning horizons, sourcing assumptions, or risk posture remains indirect. Decisions that shape readiness, availability, efficiency, and resilience continue to be made as if additive manufacturing were peripheral, not even part of the strategic equation.

Over time, this misalignment reinforces itself.

Teams optimize against the metrics they are given.

Leaders interpret progress through the indicators they receive.

Additive manufacturing becomes proficient at demonstrating activity while struggling to demonstrate influence.

The engineering team presents a case showing additive manufacturing reduced lead time from fourteen weeks to three days and eliminated a six-part assembly. The executive asks what the cost per part was, finds it higher, and tables the discussion. Both leave the meeting frustrated—and both are measuring accurately. They are measuring different things.

This is where conversations stall.

Why Resilience Resists Measurement

Resilience resists measurement for reasons examined in detail earlier in this series—it manifests as what does not happen. The more practical question is what organizations measure instead, and why those proxies miss the phenomenon.

Organizations with high Resilience report observations like these:

- Our working capital requirements decreased even as revenue grew.

- We can change direction without massive write-downs.

- Forecast errors don’t create the same financial damage they once did.

These are structural changes in economic exposure—but they rarely translate to dashboard metrics.

Organizations attempting to measure Resilience directly often default to proxies that miss the phenomenon:

- Inventory turns measure how efficiently existing inventory moves, not whether less inventory is required structurally.

- Cash-to-cash cycle time measures how quickly capital flows through operations, not whether less capital must be committed in the first place.

- Risk mitigation metrics measure preparation for specific scenarios, not economic capacity to adapt to any scenario that actually occurs.

What reveals Resilience more accurately:

- Tracking capital commitment timelines (when irreversible decisions must be made)

- Measuring financial exposure to forecast error (cost of being wrong by X%)

- Assessing stranded capital events (write-downs from obsolete inventory or abandoned tooling)

- Evaluating opportunity cost of locked capital (strategic options forgone because resources were committed)
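One of these measures, financial exposure to forecast error, can be sketched as a simple calculation. This is a minimal illustration under stated assumptions, not a method defined by the framework. The function name, the cost model (units committed before demand is known and then left unsold are written off at full cost), and every figure are hypothetical.

```python
def overforecast_exposure(forecast_units, error_fraction, committed_fraction, unit_cost):
    """Write-down if actual demand falls short of forecast by error_fraction.

    committed_fraction is the share of forecast volume that must be bought
    or built before actual demand is known (an assumed, simplified model).
    """
    actual_demand = forecast_units * (1 - error_fraction)
    committed_units = forecast_units * committed_fraction
    # Only units committed in excess of actual demand are stranded.
    stranded_units = max(0.0, committed_units - actual_demand)
    return stranded_units * unit_cost


# Hypothetical scenario: forecast of 1,000 units at a cost of 50 each,
# with demand arriving 30% below forecast.
full_commitment = overforecast_exposure(1000, 0.30, 1.0, 50.0)
partial_commitment = overforecast_exposure(1000, 0.30, 0.6, 50.0)

print(f"Exposure with 100% committed up front: {full_commitment:.0f}")
print(f"Exposure with 60% committed up front:  {partial_commitment:.0f}")
```

The forecast error is identical in both cases. What differs is how much had to be committed before the error became visible, which is the structural change that conventional dashboards tend not to show.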

These measurements are less precise than conventional metrics. They require interpretation. They become visible primarily in hindsight—when demand shifted, when strategies pivoted, when organizations with Resilience adapted at lower cost than those without it.

This is why Resilience is chronically undervalued. What cannot be measured on quarterly dashboards tends not to be recognized at all—even when it determines whether strategic adaptation is economically feasible.

Organizations that understand this measure differently. They track whether economic structure has changed, not whether recovery from specific disruptions has accelerated. They assess capital commitment patterns, not disaster response capabilities. They recognize that Resilience operates before disruption occurs—in the financial flexibility that determines how much change costs when it becomes necessary.

The Strategic Impacts provide a way out of this loop.

They do not replace measurement, but they reframe its purpose. Instead of asking what additive manufacturing delivers, they ask what it changes. Instead of optimizing for visible output, they direct attention to shifts in preparation, access, structural efficiency, and economic adaptability.

This reframing does not eliminate the need for metrics. It changes which questions metrics are meant to answer.

Understanding what is being measured—and what is being missed—is a necessary step toward aligning additive manufacturing activity with its strategic impact. Without that alignment, organizations risk refining their measurements while leaving their assumptions untouched.

Rather than tracking utilization, organizations might observe:

- How often late-stage design changes occur without penalty

- How frequently minimum order quantities prevent action

- How much inventory exists to buffer against uncertainty that additive manufacturing could absorb

Measurement, like maturity, becomes meaningful only when it reflects integration rather than activity.

Terms Used in This Article

- Maturity — the degree to which AM changes organizational assumptions, not capability advancement

- Condition — a state present in the organization whether or not named or measured

- Resilience — economic capacity to adapt without disproportionate financial penalty

- Readiness — organizational preparedness without premature commitment